Historical marine data


Familiarize yourself with the use case scenario.

Scenario

This narrative was developed as a basis for practical exercises in the biodiversity data mobilization course. The exercise concept and content were initially developed by David Bloom and Amanda Whitmire for use in CESP2022-009 (Expanding the Network: Bringing marine research stations and library collections into the data-sharing community).

This is a partially fictionalized scenario based on a real project and datasets. It is intended for instructional purposes only. Use case data may not be reproduced for any other purpose without the permission of the Harold A. Miller Library at Hopkins Marine Station (HMS-Library@lists.stanford.edu).

Description

Hopkins Marine Station (HMS) is the marine laboratory of Stanford University. The station is located two hours south of the main Stanford campus on the western coast of California. HMS is one of the oldest marine laboratories in the United States, and its library houses an extensive collection of historical marine data in the form of ship manifests, logs, field books, academic papers (including student papers), and other analog materials.

While contributing to several large-scale digitization and online repository projects, the Head Librarian met a local GBIF Node Manager who encouraged HMS to begin digitizing the library’s extensive collection of biodiversity data and publishing it to GBIF and other data aggregators. The Librarian has recently made it a priority to transfer the data from field books and note cards into a new collection of image files (.jpg), PDF documents, and spreadsheets containing event and occurrence data. The new files, and the data contained within them, will be organized in the library’s new content management system.

The process of digitizing and publishing HMS data will take time. The library budget is allocated annually and does not include funds for digitization or for hiring new staff for the project. Human resources are limited to the Head Librarian (who has a PhD in Oceanography), a Library Assistant with an MS in Library and Information Science, an undergraduate work-study student who is paid for 10 hours per week, and two local retirees who volunteer at the library twice weekly in four-hour blocks.

The Librarian and Assistant already have a large-format scanner and the software needed to scan the library’s wide variety of documents, including bound materials. Together, they have determined that digitization should begin with the work of one of HMS’s best-known collectors, Willis G. Hewatt. They have also developed a preliminary workflow for the job:

Identify a specific locality or transect within the Hewatt archive and then identify any associated historic or modern faculty or student papers, journal articles, books, and other related resources (e.g., index cards, maps, field books).

The Library Assistant will pull all of the analog resources from the HMS archives and then scan them into appropriate digital formats, using optical character recognition (OCR) whenever possible. Data formatted as tables will be converted and imported into a common spreadsheet.

The volunteers will review the OCR’d files for conversion errors and confirm that the tables match the analog source materials. Any living authors will be consulted when questions arise about their work.

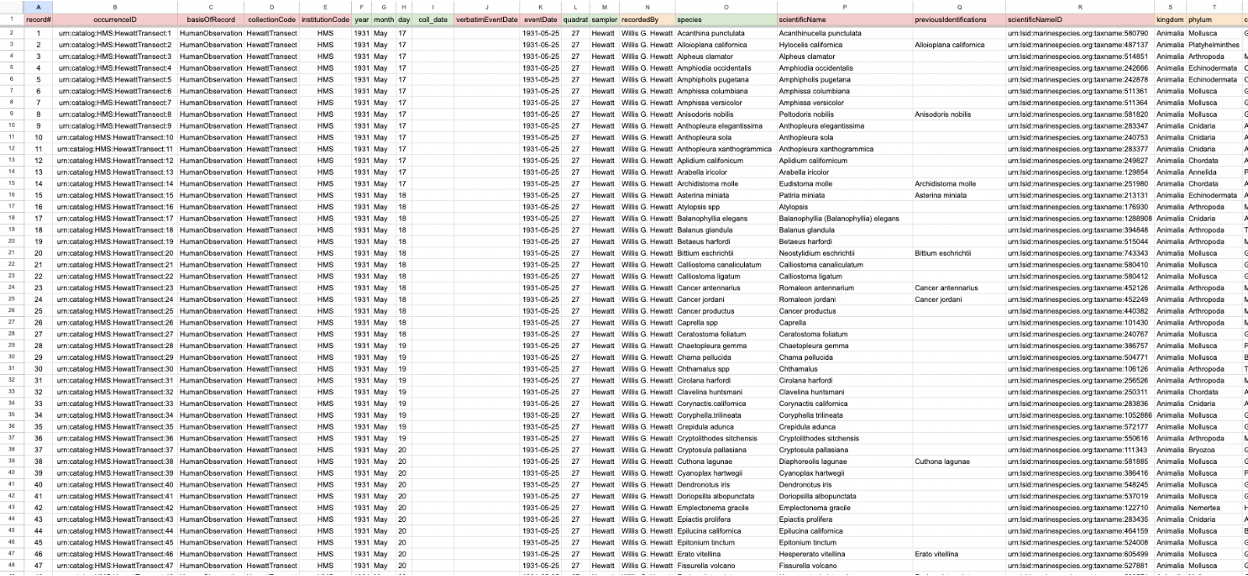

Under the direct supervision of the Library Assistant and the indirect supervision of the Head Librarian, the work-study student will map the data tables to Darwin Core fields, adding information from associated papers and other written resources (e.g., journal articles, manuscripts). The Head Librarian will provide a final review to confirm the accuracy and completeness of the data.

All standardized tables will be sent to the GBIF Node Manager, who will provide access to the Node IPT, register Hopkins Marine Station with GBIF, and ensure that the datasets are published correctly to both GBIF and the Ocean Biodiversity Information System (OBIS).

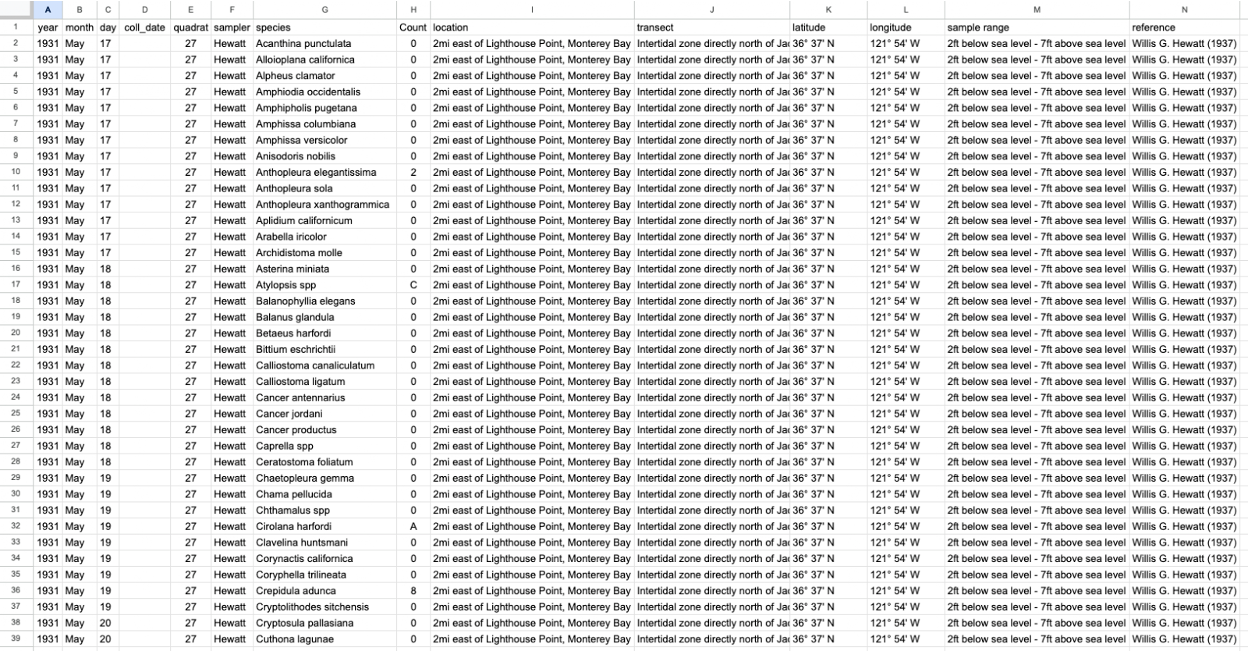
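The mapping step in the workflow above can be illustrated with a minimal Python sketch. It assumes the transcribed table has the hypothetical column headers `Species`, `Date`, and `Observer`; the real headers in the HMS spreadsheets may differ. The Darwin Core terms on the right-hand side are real terms, but the choice of which source column maps to which term is an assumption made for illustration, and the sample row contains placeholder values rather than data from the archive.

```python
# Hypothetical source headers (left) mapped to Darwin Core terms (right).
COLUMN_MAP = {
    "Species": "scientificName",
    "Date": "eventDate",
    "Observer": "recordedBy",
}

# Values that are the same for every record and come from associated
# resources rather than from the table itself.
CONSTANTS = {
    "basisOfRecord": "HumanObservation",
    "associatedReferences": "https://purl.stanford.edu/wr792fj0469",
}


def to_darwin_core(row, column_map, constants):
    """Rename source columns to Darwin Core terms and add constant values."""
    record = {
        dwc_term: row[source].strip()
        for source, dwc_term in column_map.items()
        if source in row
    }
    record.update(constants)
    return record


# Placeholder row: "Aus bus" is a conventional dummy name, not a real taxon.
source_row = {"Species": "Aus bus ", "Date": "1931-07-14", "Observer": "Example Observer"}
record = to_darwin_core(source_row, COLUMN_MAP, CONSTANTS)
print(record)
```

Keeping the column map and the constants as plain dictionaries means a reviewer can check the mapping decisions without reading the conversion logic.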

Original data collection

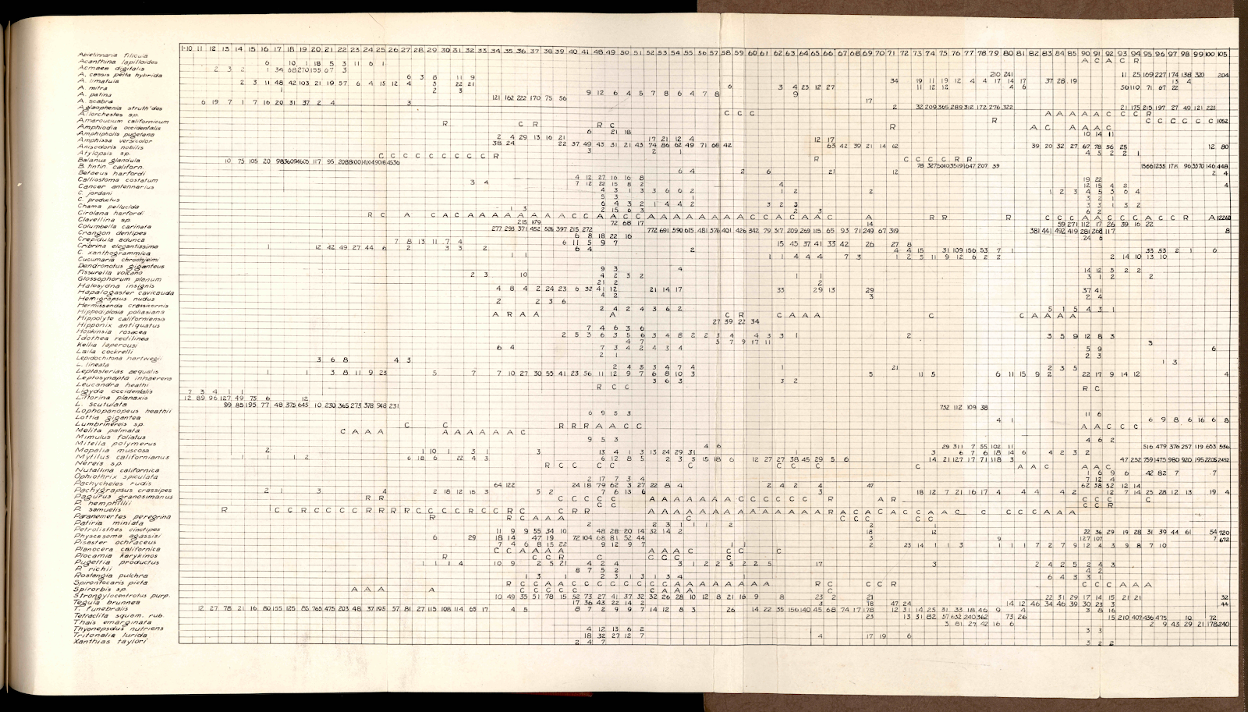

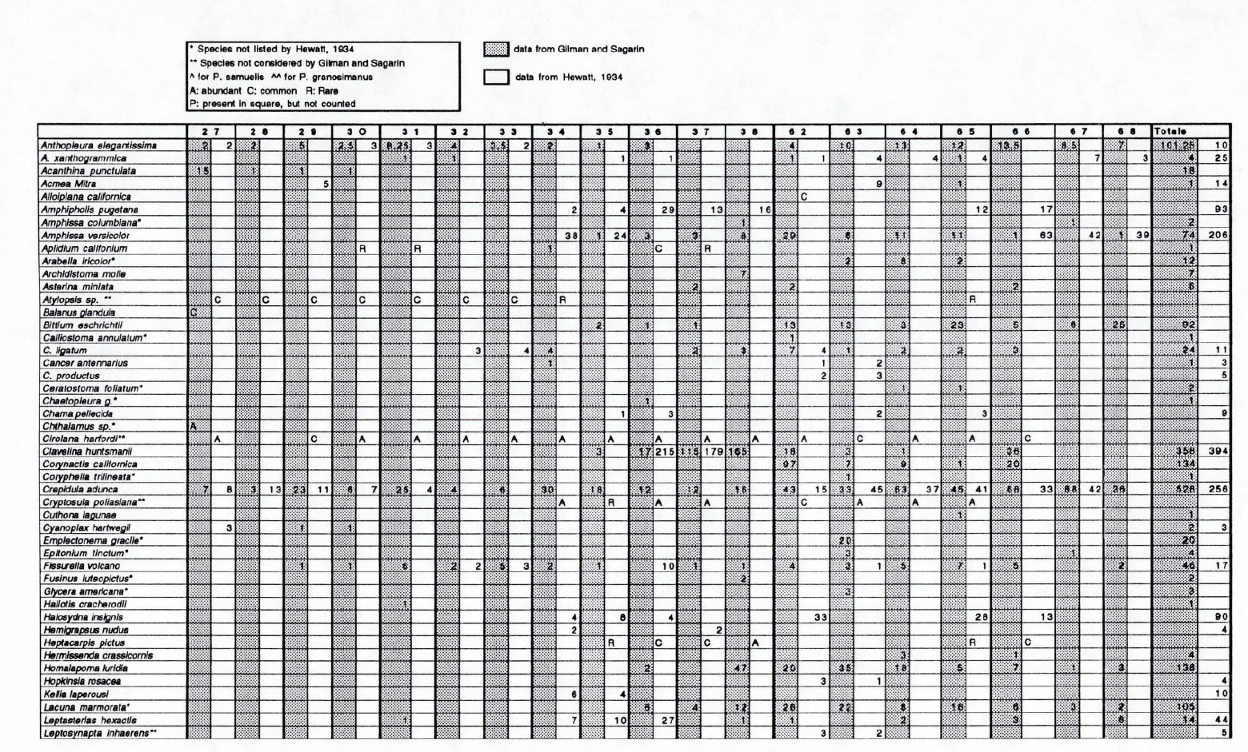

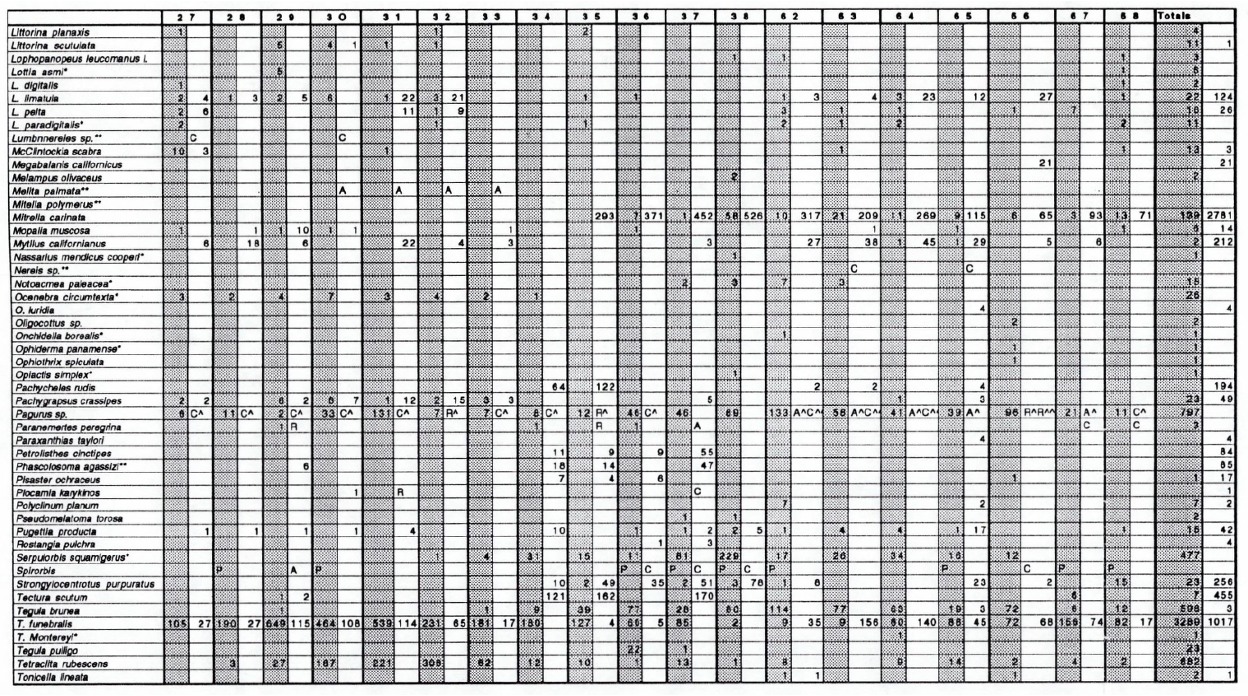

The first collection of data selected by the Head Librarian is the “Hewatt Transect”, a qualitative observational record of the intertidal zone directly adjacent to the HMS facility. Data along the 108-yard transect were sampled and reported in 1-square-yard quadrats. While observations were recorded continuously from 1931 to 1933, with additional observations added in 1941, only a handful of selected ‘representative’ quadrats were sampled consistently (Figure 1). A 1993 student paper (Gilman & Sagarin) sought to resurvey the same transect, although only part of the original transect was observed (Figures 2a and 2b). Both projects began the survey with a list of species expected to be present within the transect. Hewatt’s dissertation and Gilman & Sagarin’s student paper were preserved by the library, along with related peer-reviewed papers. The data needed to publish to GBIF, OBIS, and other data aggregators are spread across all of these resources in the library archive.

Data conversion and aggregation

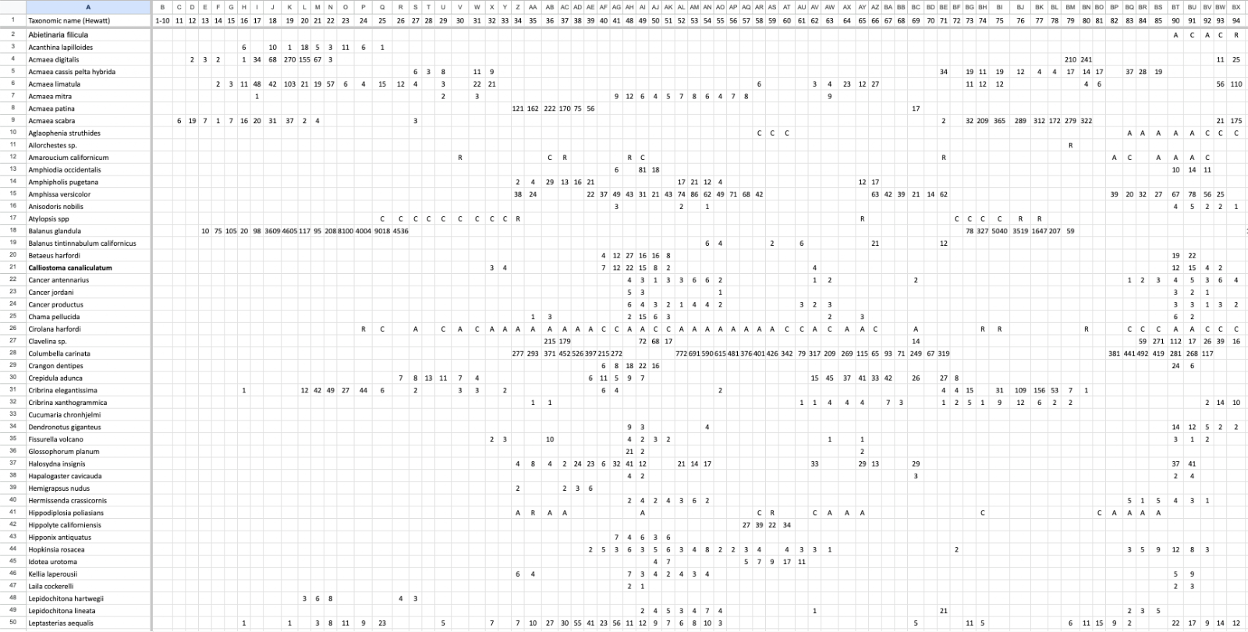

Both Hewatt and Gilman & Sagarin recorded their data as tables of species for each transect. These “lists” were converted into a format aligned with an occurrence dataset (Figures 3 and 4).
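As a rough illustration of this kind of conversion, the Python sketch below turns a species-by-quadrat table into one occurrence record per species and quadrat. The input layout, the identifier pattern, and the species names are all assumptions made for the example; they are not taken from the Hewatt or Gilman & Sagarin tables. Recording unmarked species as `absent` is also an assumption: it is only defensible because both surveys started from a list of expected species, and a publisher may prefer to record presences only.

```python
def species_table_to_occurrences(table, event_id):
    """Convert {species: {quadrat: present?}} into occurrence records.

    One record is produced for every species/quadrat pair, with
    occurrenceStatus set to "present" or "absent".
    """
    occurrences = []
    for species, marks in table.items():
        for quadrat, present in marks.items():
            quadrat_event = f"{event_id}:q{quadrat}"
            occurrences.append({
                # Hypothetical identifier pattern, chosen for readability.
                "occurrenceID": f"{quadrat_event}:{species.replace(' ', '_')}",
                "eventID": quadrat_event,
                "scientificName": species,
                "occurrenceStatus": "present" if present else "absent",
                "basisOfRecord": "HumanObservation",
            })
    return occurrences


# Placeholder table: dummy names and marks, not real survey data.
example_table = {
    "Aus bus": {1: True, 2: False},
    "Cus dus": {1: True, 2: True},
}
occurrences = species_table_to_occurrences(example_table, "example-survey")
for occurrence in occurrences:
    print(occurrence["occurrenceID"], occurrence["occurrenceStatus"])
```

The wide table has one row per species, while the occurrence format has one row per observation, so a table with two species and two quadrats becomes four records.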

Data Assessment

As part of the review of the converted and aggregated tables, the Head Librarian paid special attention to the data that describe the following (see Figure 5):

- Observation depth
- Date and time
- Geographic coordinates
- Locality descriptions
- Research methods
- Species identifiers
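A review of the items listed above could be supported by a simple automated check. The Python sketch below is one possible approach, not the method used in the project: it assumes each assessed item corresponds to the Darwin Core term shown in `ASSESSED_TERMS` (in particular, treating "species identifiers" as `scientificName` is a guess), that values arrive as strings as they would from a CSV file, and that dates are single days in `YYYY-MM-DD` form. The sample records contain placeholder values only.

```python
from datetime import date

# Assumed correspondence between the assessed items and Darwin Core terms.
ASSESSED_TERMS = [
    "minimumDepthInMeters",  # observation depth
    "eventDate",             # date and time
    "decimalLatitude",       # geographic coordinates
    "decimalLongitude",
    "locality",              # locality description
    "samplingProtocol",      # research methods
    "scientificName",        # species identifier (assumption)
]


def assess_record(record):
    """Return a list of problems found in one record (empty if none)."""
    problems = [
        f"missing {term}"
        for term in ASSESSED_TERMS
        if not str(record.get(term, "")).strip()
    ]
    event_date = str(record.get("eventDate", "")).strip()
    if event_date:
        try:
            date.fromisoformat(event_date)
        except ValueError:
            problems.append(f"eventDate not YYYY-MM-DD: {event_date}")
    for term, limit in (("decimalLatitude", 90.0), ("decimalLongitude", 180.0)):
        value = str(record.get(term, "")).strip()
        if not value:
            continue  # already reported as missing
        try:
            number = float(value)
        except ValueError:
            problems.append(f"{term} is not a number: {value}")
            continue
        if abs(number) > limit:
            problems.append(f"{term} out of range: {value}")
    return problems


# Placeholder records: rough illustrative values, not surveyed data.
good = {
    "minimumDepthInMeters": "0", "eventDate": "1931-07-14",
    "decimalLatitude": "36.6", "decimalLongitude": "-121.9",
    "locality": "Example transect", "samplingProtocol": "1 square yard quadrat",
    "scientificName": "Aus bus",
}
bad = dict(good, eventDate="14/07/1931", decimalLatitude="", decimalLongitude="-1219")
print(assess_record(good))
print(assess_record(bad))
```

A check like this only flags missing or malformed values; deciding whether a locality description or a method statement is accurate still requires comparison against the source documents.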

References

- Hewatt, Willis Gilliland. “Ecological Studies on Selected Marine Intertidal Communities of Monterey Bay.” Ph.D., Stanford University, 1934. https://purl.stanford.edu/wr792fj0469.
- Gilman, Sarah E., and Raphael D. Sagarin. “Temperature Correlated Long-Term Faunal Changes in the Rocky Intertidal.” Hopkins Marine Station Student Papers. Stanford (Calif.), 1993. Stanford Digital Repository. https://purl.stanford.edu/jd254tq7230.

Exercises

Download the exercise sheet. (MS Word, 2.8 MB)

Exercise 1

Data capture

- Download UC-MS-2-ForCapture.pdf. (?? KB)
- Download UC-MS-2-template.xlsx. (82 KB)
- What data structure would you use to reflect the data?
- Create a spreadsheet using this structure and the data.
- Use the exercise sheet to provide your answers and submit the spreadsheet created in the previous step.

Exercise 2

Data management

- Download UC-MS-2-ForCleaning.csv. (82 KB)
- Assess the dataset and identify which types of errors it contains.
- Identify different ways to correct these problems, and make the corrections for as many of the errors as possible.
- Use the exercise sheet to provide your answers and submit the spreadsheet.

Exercise 3

Data publishing

- Download UC-MS-3-ForPublication.csv. (402 KB)
- Use the IPT installation indicated earlier to publish the dataset provided.
- Use the exercise sheet to provide your answers, along with a link to the published dataset.